Big Data Analytics | Applications

- May 8, 2019

- 59 min read

“You can have data without information, but you cannot have information without data.”

– Daniel Keys Moran

What is Big Data?

Technology is developing at a very fast pace, and every field, including government, scientific research and industry, now produces an enormous amount of data. This is what we call Big Data. We need to study, store and process this data properly, but it is difficult to analyse with traditional data processing tools. To overcome these challenges, Big Data solutions like Hadoop were introduced.

Big data is a term for large and complex unprocessed data. This data is difficult and time-consuming to process using traditional processing methodologies. Big data can be characterized by:

Volume, Variety, Velocity, Variability, Veracity and Complexity.

Why do we need to analyse this Big Data?

The primary goal of big data analytics is to help companies make more informed business decisions by enabling data scientists, predictive modelers, and other analytics professionals to analyze large volumes of transactional data, as well as other forms of data that may be untapped by more conventional Business Intelligence (BI) programs. That could include web server logs and Internet click-stream data, social media content and social network activity reports, text from customer emails and survey responses, mobile phone call detail records and machine data captured by sensors connected to the Internet of Things.

Big Data Applications:

1. Government Sector

In the government sector, the biggest challenge is the integration of big data across different government departments and affiliated organizations.

At election time, governments use this big data to check how the public is responding to government action and to ideas for policy implementation.

Examples:

1. Obama's successful 2012 election campaign.

2. The BJP's win in the Indian general elections of 2014.

Governments face a huge amount of data on an almost daily basis, because they have to keep track of various records and databases regarding citizens, their growth, energy resources, geographical surveys and much more. All of this data contributes to big data. Proper study and analysis of this data helps governments in many ways.

Welfare schemes:

In making faster and informed decisions regarding various political programs.

To identify the areas that are in immediate need of attention.

To stay up-to-date in the field of agriculture by keeping track of all the land and livestock that exists.

To overcome national challenges such as unemployment, terrorism, energy resource exploration and more.

Cyber security:

Big data is widely used for fraud detection.

Governments are also using big data to catch tax evaders.

The Food and Drug Administration (FDA), which runs under the jurisdiction of the US Federal Government, uses big data analysis to discover patterns and associations in order to identify and examine expected or unexpected occurrences of food-based infections.

This allows for a faster response, which has led to faster treatment and fewer deaths.

In public services, big data has a very wide range of applications including: energy exploration, financial market analysis, fraud detection, health related research and environmental protection.

Big data is being used to analyze the large number of disability claims made to the Social Security Administration (SSA), which arrive in the form of unstructured data. The analytics are used to process medical information rapidly and efficiently for faster decision making and to detect suspicious or fraudulent claims.

The Department of Homeland Security uses big data for several different use cases. Big data is analyzed from different government agencies and is used to protect the country.

Big Data Providers in this industry include: Digital Reasoning, Socrata and HP

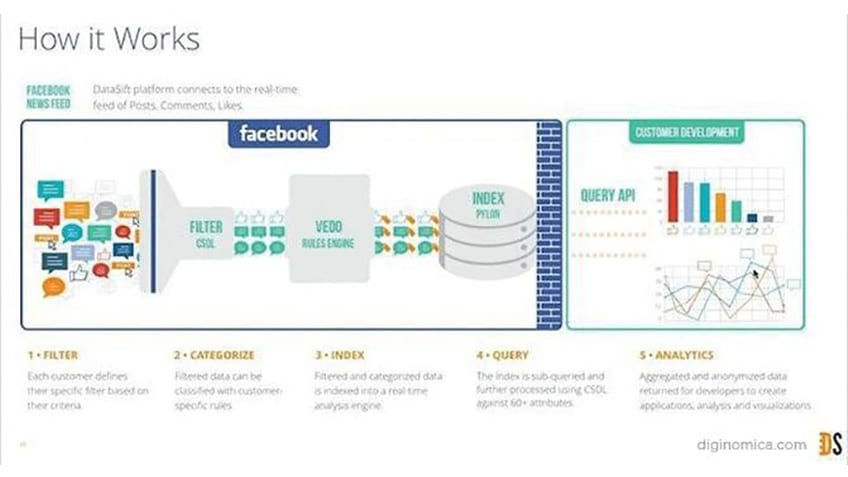

2. Social Media Analytics

Various solutions have been built to analyze social media activity. IBM’s Cognos Consumer Insights, for example, is a point solution running on IBM’s BigInsights Big Data platform that can make sense of the chatter.

Social media can provide valuable real-time insights into how the market is responding to products and campaigns.

With the help of these insights, the companies can adjust their pricing, promotion, and campaign placements accordingly.

Before big data can be used, it needs some pre-processing in order to yield intelligent and valuable results.

Understanding the consumer mindset therefore depends on applying intelligent decisions derived from big data.

3. Communications, Media and Entertainment

With a large number of people having access to digital gadgets and numerous social media platforms coming up, we can see a rise in big data in the media and entertainment industry.

Some of the benefits extracted from the big data in media and entertainment industry:

Predicting the interests of audiences.

Optimized or on-demand scheduling of media streams in digital media distribution platforms.

Getting insights into customer reviews.

Effective targeting of the advertisements for media.

Since consumers expect rich media on-demand in different formats and in a variety of devices, some big data challenges in the communications, media and entertainment industry include:

Collecting, analyzing, and utilizing consumer insights

Leveraging mobile and social media content

Understanding patterns of real-time, media content usage

Applications

Organizations in this industry simultaneously analyze customer data along with behavioral data to create detailed customer profiles that can be used to:

Create content for different target audiences

Recommend content on demand

Measure content performance

A case in point is the Wimbledon Championships, which leverages big data to deliver detailed sentiment analysis on the tennis matches to TV, mobile, and web users in real time.

Spotify, an on-demand music service, uses Hadoop big data analytics to collect data from its millions of users worldwide and then uses the analyzed data to give informed music recommendations to individual users.

Amazon Prime, which aims to provide a great customer experience by offering video, music and Kindle books in a one-stop shop, also relies heavily on big data.

Big Data Providers in this industry include:

Infochimps, Splunk, Pervasive Software, and Visible Measures

4. Technology

The following technology companies deal with huge amounts of data every day and put it to use for business decisions:

eBay.com uses two data warehouses at 7.5 petabytes and 40PB as well as a 40PB Hadoop cluster for search, consumer recommendations, and merchandising. (Source: “Inside eBay’s 90PB data warehouse”.)

Amazon.com handles millions of back-end operations every day, as well as queries from more than half a million third-party sellers. The core technology that keeps Amazon running is Linux-based and as of 2005, they had the world’s three largest Linux databases, with capacities of 7.8 TB, 18.5 TB, and 24.7 TB.

Facebook handles 50 billion photos from its user base.

Windermere Real Estate uses anonymous GPS signals from nearly 100 million drivers to help new home buyers determine their typical drive times to and from work throughout various times of the day.

5. Fraud detection

For businesses whose operations involve any type of claims or transaction processing, fraud detection is one of the most compelling Big Data application examples. In most cases, fraud is discovered long after the fact, at which point the damage has been done and all that’s left is to minimize the harm and adjust policies to prevent it from happening again. Big Data platforms that can analyze claims and transactions in real time, identifying large-scale patterns across many transactions or detecting anomalous behavior from an individual user, can fundamentally change fraud detection.
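To make "detecting anomalous behavior from an individual user" a little more concrete, here is a minimal sketch in Python. It is only an illustration, not how any particular fraud platform works: the function name `flag_anomalies`, the sample amounts and the threshold of three standard deviations are all assumptions chosen for the example.

```python
from statistics import mean, stdev

def flag_anomalies(history, new_amounts, threshold=3.0):
    """Return the new amounts that lie more than `threshold` standard
    deviations away from the mean of the user's past amounts."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Every past amount is identical, so any different amount is unusual.
        return [a for a in new_amounts if a != mu]
    return [a for a in new_amounts if abs(a - mu) / sigma > threshold]

# Hypothetical past card payments for one user, and two new ones to score.
past = [42.0, 38.5, 45.0, 40.0, 39.5, 44.0, 41.0]
flagged = flag_anomalies(past, [43.0, 950.0])
print(flagged)
```

A real system would score each transaction as it arrives and combine many more signals (location, merchant, time of day), but the idea is the same: compare new behavior with what is normal for that user.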

6. Call Center Analytics

What’s going on in a customer’s call center often influences market sentiment, but without a Big Data solution, much of the insight that a call center can provide will be overlooked or discovered too late. Big Data solutions can help identify recurring problems or customer and staff behavior patterns on the fly not only by making sense of time/quality resolution metrics but also by capturing and processing call content itself.

7. Banking and Securities

A study shows that the challenges in this industry include:

Securities fraud early warning, tick analytics, card fraud detection, archival of audit trails, enterprise credit risk reporting, trade visibility, customer data transformation, social analytics for trading, IT operations analytics, and IT policy compliance analytics.

The Securities and Exchange Commission (SEC) is using big data to monitor financial market activity. It currently uses network analytics and natural language processors to catch illegal trading activity in the financial markets.

Retail traders, big banks and hedge funds use big data for trade analytics used in high-frequency trading, pre-trade decision-support analytics, sentiment measurement, predictive analytics and more.

This industry also relies heavily on big data for risk analytics, including anti-money laundering, demand enterprise risk management, "Know Your Customer", and fraud mitigation.

Big Data providers specific to this industry include: 1010data, Panopticon Software, Streambase Systems, Nice Actimize and Quartet FS

The use of customer data invariably raises privacy issues. By uncovering hidden connections between seemingly unrelated pieces of data, big data analytics could potentially reveal sensitive personal information. Research indicates that 62% of bankers are cautious in their use of big data due to privacy issues. Further, outsourcing data analysis activities or distributing customer data across departments to generate richer insights also amplifies security risks, such as customers’ earnings, savings, mortgages, and insurance policies ending up in the wrong hands. Such incidents reinforce concerns about data privacy and discourage customers from sharing personal information in exchange for customized offers.

The banking sector holds an enormous amount of data. According to a GDC prognosis, this data is estimated to grow 700% by 2020. Proper study and analysis of this data can help detect illegal activities and support related tasks such as:

The misuse of credit cards

Misuse of debit cards

Venture credit hazard treatment

Business clarity

Customer statistics alteration

Money laundering

Risk Mitigation

Example:

Various anti-money laundering software products, such as SAS AML, use data analytics mainly to detect suspicious transactions and analyze customer data. Bank of America has been a SAS AML customer for more than 25 years.

8. Insurance

Lack of personalized services, lack of personalized pricing and the lack of targeted services to new segments and to specific market segments are some of the main challenges.

Big data has been used in the industry to provide customer insights for transparent and simpler products, by analyzing and predicting customer behavior through data derived from social media, GPS-enabled devices and CCTV footage. Big data also helps insurance companies retain customers better.

Big Data Providers in this industry include: Sprint, Qualcomm, Octo Telematics, The Climate Corp.

9. Agriculture

A biotechnology firm uses sensor data to optimize crop efficiency. It plants test crops and runs simulations to measure how plants react to various changes in condition. Its data environment constantly adjusts to changes in the attributes of various data it collects, including temperature, water levels, soil composition, growth, output, and gene sequencing of each plant in the test bed.

10. Marketing

Marketers have begun to use facial recognition software to learn how well their advertising succeeds or fails at stimulating interest in their products. A recent study published in the Harvard Business Review looked at what kinds of advertisements compelled viewers to continue watching and what turned viewers off. Among their tools was “a system that analyses facial expressions to reveal what viewers are feeling.” The research was designed to discover what kinds of promotions induced watchers to share the ads with their social network, helping marketers create ads most likely to “go viral” and improve sales.

11. Smart Phones

People now carry facial recognition technology in their pockets. Users of iPhone and Android smartphones have applications at their fingertips that use facial recognition technology for various tasks.

For example, Android users with the remember app can snap a photo of someone, then bring up stored information about that person based on their image when their own memory lets them down, a potential boon for salespeople.

12. Telecom

Operators face a challenge when they need to deliver new, compelling, revenue-generating services without overloading their networks, while keeping their running costs under control. The market demands a new set of data management and analysis capabilities that can help service providers make accurate decisions by taking into account customer, network context and other critical aspects of their businesses. Most of these decisions must be made in real time, placing additional pressure on the operators. Real-time predictive analytics can help leverage the data that resides in their multitude of systems, make it immediately accessible and help correlate that data to generate insight that can drive their business forward.

13. Healthcare

Traditionally, the healthcare industry has lagged behind other industries in the use of big data. Part of the problem stems from resistance to change: providers are accustomed to making treatment decisions independently, using their own clinical judgment, rather than relying on protocols based on big data. Other obstacles are more structural in nature. Even within a single hospital, payer, or pharmaceutical company, important information often remains siloed within one group or department because organizations lack procedures for integrating data and communicating findings.

Health care stakeholders now have access to promising new threads of knowledge. This information is a form of “big data,” so called not only for its sheer volume but for its complexity, diversity, and timeliness. Pharmaceutical industry experts, payers, and providers are now beginning to analyze big data to obtain insights. Recent technological advances in the industry have improved their ability to work with such data, even though the files are enormous and often have different database structures and technical characteristics.

Healthcare is yet another industry that is bound to generate a huge amount of data. Following are some of the ways in which big data has contributed to healthcare:

Big data reduces the cost of treatment, since there is less chance of having to perform unnecessary diagnoses.

It helps in predicting outbreaks of epidemics and in deciding what preventive measures could be taken to minimize their effects.

It helps avoid preventable diseases by detecting them in their early stages and keeping them from getting worse, which in turn makes treatment easier and more effective.

Patients can be provided with evidence-based medicine, which is identified and prescribed after researching past medical results.

Example:

Wearable devices and sensors have been introduced in the healthcare industry which can provide a real-time feed to a patient's electronic health record. One such technology is from Apple.

Apple has come up with what they call Apple HealthKit, CareKit and ResearchKit. The main goal is to empower the iPhone users to store and access their real time health records on their phones.

Industry-Specific challenges

The healthcare sector has access to huge amounts of data but has been plagued by failures in utilizing the data to curb rising healthcare costs and by inefficient systems that stifle faster and better healthcare benefits across the board.

This is mainly due to the fact that electronic data is unavailable, inadequate, or unusable. Additionally, the healthcare databases that hold health-related information have made it difficult to link data that can show patterns useful in the medical field.

Other challenges related to big data include: the exclusion of patients from the decision making process, and the use of data from different readily available sensors.

Applications of big data in the healthcare sector

Some hospitals, like Beth Israel, are using data collected from a cell phone app, from millions of patients, to allow doctors to use evidence-based medicine as opposed to administering several medical/lab tests to all patients who go to the hospital. A battery of tests can be efficient, but it can also be expensive and is usually ineffective.

Free public health data and Google Maps have been used by the University of Florida to create visual data that allows for faster identification and efficient analysis of healthcare information, used in tracking the spread of chronic disease.

Obamacare has also utilized big data in a variety of ways.

Big Data Providers in this industry include: Recombinant Data, Humedica, Explorys and Cerner

14. Education

The education industry is flooded with a huge amount of data related to students, faculties, courses, results and more. It was not long before we realized that proper study and analysis of this data can provide insights that can be used to improve the operational effectiveness and working of educational institutes.

Following are some of the fields in the education industry that have been transformed by changes motivated by big data:

Customized and dynamic learning programs:

Customized programs and schemes for each individual can be created using the data collected on the basis of a student’s learning history, to benefit all students. This improves overall student results.

Reframing course material:

Course material can be reframed according to data collected on what a student learns and to what extent, by monitoring in real time which components of a course are easier to understand.

Grading Systems:

New advancements in grading systems have been introduced as a result of proper analysis of student data.

Career prediction:

Proper analysis and study of every student’s records will help in understanding the student’s progress, strengths, weaknesses, interests and more. It will help in determining which career would be most appropriate for the student in the future.

The applications of big data have provided a solution to one of the biggest pitfalls in the education system, that is, the one-size-fits-all fashion of academic set up, by contributing in e-learning solutions.

Example:

The University of Alabama has more than 38,000 students and an ocean of data. In the past, when there were no real solutions to analyze that much data, some of that data seemed useless. Now administrators are able to use analytics and data visualizations to draw out patterns among students, revolutionizing the university’s operations, recruitment and retention efforts.

Industry-Specific big data challenges

From a technical point of view, a major challenge in the education industry is to integrate big data from different sources, platforms and vendors that were not designed to work with one another, and to use it on platforms that were not designed for such varied data.

From a practical point of view, staff and institutions have to learn the new data management and analysis tools.

Politically, issues of privacy and personal data protection associated with big data used for educational purposes are a challenge.

Applications of big data in Education

Big data is used quite significantly in higher education. For example, the University of Tasmania, an Australian university with over 26,000 students, has deployed a Learning and Management System that tracks, among other things, when a student logs onto the system, how much time is spent on different pages in the system, and the overall progress of a student over time.

In a different use case in education, big data is also used to measure teachers’ effectiveness to ensure a good experience for both students and teachers. A teacher’s performance can be fine-tuned and measured against student numbers, subject matter, student demographics, student aspirations, behavioral classification and several other variables.

On a governmental level, the Office of Educational Technology in the U.S. Department of Education is using big data to develop analytics to help course-correct students who are going astray while using online big data courses. Click patterns are also being used to detect boredom.

Big Data Providers in this industry include: Knewton, Carnegie Learning and MyFit/Naviance

15. Big data in weather patterns

There are weather sensors and satellites deployed all around the globe. A huge amount of data is collected from them and then this data is used to monitor the weather and environmental conditions.

All of the data collected from these sensors and satellites contributes to big data and can be used in different ways such as:

In weather forecast

To study global warming

Understanding the patterns of natural disasters

To make necessary preparations in case of crisis

To predict the availability of usable water around the world.

Example:

IBM Deep Thunder, a research project by IBM, provides weather forecasting through high-performance computing of big data. IBM is also assisting Tokyo with improved weather forecasting for natural disasters and the probability of damaged power lines, in order to plan a successful 2020 Olympics.

16. Transportation

Since the rise of big data, it has been used in various ways to make transportation more efficient and easy. Following are some of the areas where big data contributed to transportation.

Route planning: Big data can be used to understand and estimate users’ needs on different routes and on multiple modes of transportation, and then to plan routes that reduce users’ wait times.

Congestion management and traffic control: Using big data, real-time estimation of congestion and traffic patterns is now possible. For example, people use Google Maps to locate the least traffic-prone routes.

Safety level of traffic: Using real-time processing of big data and predictive analysis to identify accident-prone areas can help reduce accidents and increase the safety level of traffic.

Example

Let’s take Uber as an example here. Uber generates and uses a huge amount of data regarding drivers, their vehicles, locations, every trip from every vehicle and so on. All of this data is analyzed and then used to predict supply, demand, the location of drivers and the fares that will be set for every trip.

And guess what? We too make use of this application when we plan a route to save fuel and time, based on our knowledge of having taken that particular route sometime in the past. In this case we analyzed and made use of the data that we had previously acquired through experience, and then we used it to make a smart decision. It’s pretty cool that big data plays a part not only in such big fields but also in our smallest day-to-day decisions, isn’t it?

Industry-Specific challenges

In recent times, huge amounts of data from location-based social networks and high speed data from telecoms have affected travel behavior. Regrettably, research to understand travel behavior has not progressed as quickly.

In most places, transport demand models are still based on poorly understood new social media structures.

Applications of big data in the transportation industry

Some applications of big data by governments, private organizations and individuals include:

Government use of big data: traffic control, route planning, intelligent transport systems, congestion management (by predicting traffic conditions)

Private sector use of big data in transport: revenue management, technological enhancements, logistics and for competitive advantage (by consolidating shipments and optimizing freight movement)

Individual use of big data includes: route planning to save on fuel and time, for travel arrangements in tourism etc.

Big Data Providers in this industry include: Qualcomm and Manhattan Associates

17. Energy and Utilities

Applications of big data in the energy and utilities industry

Smart meter readers allow data to be collected almost every 15 minutes as opposed to once a day with the old meter readers. This granular data is being used to analyze consumption of utilities better which allows for improved customer feedback and better control of utilities use.
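As a rough illustration of what this granular data makes possible, the sketch below summarises one day of 15-minute readings (96 values) into a daily total and the time of the peak interval. The function name `daily_summary` and the sample readings are assumptions made up for this example, not taken from any utility's system.

```python
def daily_summary(readings_kwh):
    """Summarise one day of 15-minute smart-meter readings (96 values)."""
    if len(readings_kwh) != 96:
        raise ValueError("expected 96 readings (24 hours x 4 per hour)")
    # Index of the interval with the highest consumption.
    peak_index = max(range(96), key=lambda i: readings_kwh[i])
    hour, quarter = divmod(peak_index, 4)
    return {
        "total_kwh": sum(readings_kwh),
        "peak_kwh": readings_kwh[peak_index],
        "peak_time": f"{hour:02d}:{quarter * 15:02d}",
    }

# Hypothetical day: a flat 0.1 kWh per interval with one spike at 19:30.
day = [0.1] * 96
day[78] = 0.9
summary = daily_summary(day)
print(summary["peak_time"], summary["peak_kwh"])
```

With a once-a-day reading only the total would be visible; the 15-minute data also shows when the consumption happened.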

In utility companies the use of big data also allows for better asset and workforce management which is useful for recognizing errors and correcting them as soon as possible before complete failure is experienced.

Big Data Providers in this industry include: Alstom, Siemens, ABB and Cloudera

Big Data Is Making Fast Food Faster

The first of our big data examples is in fast food. You pull up to your local McDonald’s or Burger King, and notice that there’s a really long line in front of you. You start drumming your fingers on the wheel, lamenting the fact that your “fast food” excursion is going to be anything but, and wondering if you should drive to the Wendy’s a block away instead.

However, before you have time to think about your culinary crisis too deeply, you notice that a few cars ahead of you have already gone through. The line is moving much quicker than expected… what gives? You shrug it off, drive up to the window, and place your order.

Behind the scenes

What you may not have realized is that big data has just helped you to get your hands on those fries and burgers a little bit earlier. Some fast food chains are now monitoring their drive-through lanes and changing their menu features (you know, the ones on the LCD screen as opposed to the numbers on the board) in response. Here’s how it works: if the line is really backed up, the features will change to reflect items that can be quickly prepared and served so as to move through the queue faster. If the line is relatively short, then the features will display higher margin menu items that take a bit more time to prepare.
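The rule described above can be sketched in a few lines of Python. This is only a toy version under stated assumptions: the function `pick_featured_items`, the threshold of five cars, and the menu items with their preparation times and margins are all hypothetical, and a real chain's system is certainly more involved.

```python
def pick_featured_items(cars_in_line, menu, busy_threshold=5):
    """Choose which two items to feature on the drive-through screen.

    Long queue: feature the items that are quickest to prepare.
    Short queue: feature the items with the highest margin.
    """
    if cars_in_line >= busy_threshold:
        ranked = sorted(menu, key=lambda item: item["prep_seconds"])
    else:
        ranked = sorted(menu, key=lambda item: item["margin"], reverse=True)
    return [item["name"] for item in ranked[:2]]

# Hypothetical menu data.
menu = [
    {"name": "fries", "prep_seconds": 30, "margin": 0.60},
    {"name": "soft drink", "prep_seconds": 15, "margin": 0.80},
    {"name": "grilled chicken wrap", "prep_seconds": 240, "margin": 1.90},
    {"name": "signature burger", "prep_seconds": 300, "margin": 2.40},
]

busy = pick_featured_items(8, menu)   # long line
quiet = pick_featured_items(2, menu)  # short line
print(busy, quiet)
```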

Now that’s some smart fast food.

Self-serve Beer And Big Data

Another great big data example in real life. You walk into your favorite bar. The bartender, instead of asking you, “What’ll you have?” hands you a little plastic card instead.

“Uhhh… what’s this?” you ask. He spreads his hands. “Well, the folks upstairs wanted to try out this new system. Basically, you pour all your own beer – you just swipe this card first.”

Your eyebrows raise. “So, basically, I am my own bartender from now on?”

The bartender snorts and shakes his head. “I mean, I’ll still serve you if you’d like. But with this system, you can try as little or as much of a beer as you want. Want a quarter glass of that new IPA you’re not sure about? Go right ahead. Want only half a glass of stout because you’re a bit full from dinner? Be my guest. It’ll all get automatically added to your tab and you pay for it at the end, just like always.”

You nod, starting to get the picture. “And if I want to mix two different beers together… ”

“No,” the bartender says. “Never do that.”

Behind the scenes

You might think this scenario is from some weird beer-based science fiction book, but in reality, it’s already happening. An Israeli company by the name of Weissberger has enabled self-serve beer through two pieces of equipment:

“Flow meters” which are attached to all the taps/kegs in the bar

A router that collects all this flow data and sends it to the bar’s computer

By using this system, a lot of cool things can be made possible. For example, you can let customers pour their own beer in a “self-serve” style fashion. However, there are other profitable possibilities as well that come from the use of big data. Bar owners can use these flow meters to see which beers are selling when, according to the time of day, the day of the week, and so on. Then, they can use this data to create specials that take advantage of customer behavior.

They can also use this data to:

Order new kegs at the right time, since they know more accurately how much beer they are serving

See if certain bartenders are more “generous” with their pours than others

See if certain bartenders are giving free pours to themselves or their buddies
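Here is a minimal sketch of how flow data like this could be turned into the numbers above. The record layout (hour, beer, bartender, millilitres poured, millilitres billed), the names and the figures are all invented for illustration; the article does not describe the actual data format.

```python
from collections import defaultdict

# Hypothetical flow-meter events:
# (hour of day, beer, bartender, ml poured, ml billed)
pours = [
    (18, "lager", "Ana", 500, 500),
    (20, "ipa", "Ben", 560, 500),
    (20, "lager", "Ana", 500, 500),
    (21, "ipa", "Ben", 580, 500),
    (21, "stout", "Ana", 250, 250),
]

def volume_by_beer_and_hour(events):
    """Total millilitres poured for each (beer, hour) pair."""
    totals = defaultdict(int)
    for hour, beer, _bartender, ml_poured, _ml_billed in events:
        totals[(beer, hour)] += ml_poured
    return dict(totals)

def overpour_ratio(events):
    """Poured volume divided by billed volume, per bartender.
    A ratio above 1.0 means more beer left the tap than was charged for."""
    poured = defaultdict(int)
    billed = defaultdict(int)
    for _hour, _beer, bartender, ml_poured, ml_billed in events:
        poured[bartender] += ml_poured
        billed[bartender] += ml_billed
    return {name: poured[name] / billed[name] for name in poured}

by_slot = volume_by_beer_and_hour(pours)
ratios = overpour_ratio(pours)
print(by_slot)
print(ratios)
```

The first table tells the owner which beers sell at which hours; the second highlights bartenders whose pours are consistently larger than what ends up on the tab.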

An article titled “Using Big Data to Brew Profits One Pint at a Time” showcases the results. In Europe, the brewing company Carlsberg found that 70% of their beer sold in city bars was bought between 8-10 pm, while only 40% of their beer sold in suburban bars was bought in that time period. Using this data, they could develop market-specific prices and discounts.

Carlsberg also found that when customers were given a magnetic card and allowed to self-pour beer, they ended up consuming 30% more beer than before. This increased consumption came from customers trying small amounts of beer that they wouldn’t have bought before when they were limited to buying a full pint or larger.

Consumers Are Deciding The Overall Menu

Have you ever seen one of those marketing campaigns companies use where consumers help them “pick the next flavor?” Doritos and Mountain Dew have both used this strategy with varying levels of success. However, the underlying philosophy is sound: let the customers pick what they want, and supply that!

Well, big data is letting customers speak even more directly (without having to go to a web page). An article titled “The Big Business of Big Data” examines some of the possibilities.

Behind the scenes

One of our big data analytics examples is that of Tropical Smoothie Cafe. In 2013, they took a slight risk and introduced a veggie smoothie to their previously fruit-only smoothie menu. By keeping track of their data, Tropical Smoothie Cafe found that the veggie smoothie was soon one of their best sellers, and they introduced other versions of vegetable smoothies as a result.

Things get deeper: Tropical Smoothie Cafe was able to use big data to see at what times during the day consumers were buying the most vegetable smoothies. Then, they could use time-specific marketing campaigns (such as “happy hours”) to get consumers in the door during those times.

Big Data Makes Your Next Casino Visit More Fun

Another interesting real-life example of big data use is in casinos. You walk into the MGM Grand in Las Vegas, excited for a weekend of gambling and catching up with old friends. Immediately, you notice a change. Those slot machines that you played endlessly on your last visit have moved from their last spot in the corner to a more central location right at the entrance. Entranced by fond memories of spinning numbers and free drinks, you walk right on over.

Behind the scenes

“Our job is to figure out how to optimize the selection of games so that people have a positive experience when they walk through the door… We can understand how games perform, how well they’re received by guests and how long they should be on the floor.”

This quote is from Lon O’Donnell, MGM’s first-ever director of corporate slot analytics. An article titled “Casinos Bet Large with Big Data” expands on how MGM uses data analysis tools to measure performance and make better business decisions. Think about business from a casino’s point of view for a moment. Casinos have an interesting relationship with their customers. Of course, in the long run, they want you to lose more money than you win – otherwise, they wouldn’t be able to make a profit. However, if you lose a large amount of money in any one visit, you might have such a bad experience that you stop going altogether… which is bad for the casino. On the flip side, they also want to avoid situations where you “hit it big”, as that costs them a lot of money.

Basically, the ideal situation for a casino is when you lose more than you win over the long run, but you don’t lose a horrendous amount in any one visit. Right now, MGM is using big data to make sure that happens. By analyzing the data from individual slot machines, for example, they can tell which machines are paying out what, and how often.

They can also tell things like:

Which machines aren’t being played and need to be replaced or relocated

Which machines are the most popular (and at what times)

Which areas of the casino pull in the most profits (and which areas need to be rearranged)
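The three questions above map onto very simple calculations. The sketch below is only an illustration under assumed inputs: the `MachineStats` fields, the function names, and the sample numbers are hypothetical and do not describe MGM's real data or tools.

```python
from dataclasses import dataclass


@dataclass
class MachineStats:
    machine_id: str
    area: str
    wagered: float   # total amount bet on the machine in the period
    paid_out: float  # total amount returned to players in the period
    plays: int       # number of spins in the period


def payout_ratio(machine):
    """Share of wagered money returned to players (0.0 for an unplayed machine)."""
    return machine.paid_out / machine.wagered if machine.wagered else 0.0


def underused(machines, min_plays):
    """IDs of machines played fewer than min_plays times."""
    return [m.machine_id for m in machines if m.plays < min_plays]


def profit_by_area(machines):
    """Casino profit (wagered minus paid out) summed per floor area."""
    totals = {}
    for m in machines:
        totals[m.area] = totals.get(m.area, 0.0) + (m.wagered - m.paid_out)
    return totals


# Made-up figures for three machines.
floor = [
    MachineStats("A1", "entrance", wagered=1000.0, paid_out=900.0, plays=500),
    MachineStats("A2", "entrance", wagered=2000.0, paid_out=1900.0, plays=900),
    MachineStats("B1", "corner", wagered=100.0, paid_out=80.0, plays=20),
]

print(underused(floor, min_plays=50))  # prints ['B1']
print(profit_by_area(floor))           # prints {'entrance': 200.0, 'corner': 20.0}
```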

In The Restaurants

This particular real-life data example applies to restaurants. Imagine this: you’re relaxing at home, trying to decide which restaurant to eat at with your spouse. You live in NYC and work long hours, and there are just so many options. The decision is taking a bit longer than it should; you’ve had a long week and your brain is fried.

Suddenly, an email arrives in your inbox. Delaying your food choices for a moment (and ignoring the withering glare of your spouse as you zone out of the conversation) you see an email from Fig & Olive, your favorite Mediterranean joint that you were a regular at but haven’t been able to visit in more than a month. The subject line says “We Miss You!” and when you open it, you’re greeted with a message that communicates two points:

Fig & Olive is wondering why you haven’t been in for a while.

They want to give you a free order of crostini because they just miss you so much!

“Honey”, you exclaim, “I know where we’re going!”

Behind the scenes

The 7-unit NY-based Fig & Olive has been using guest management software to track its guests’ ordering habits and to deliver targeted email campaigns. For example, the “We Miss You!” campaign generated almost 300 visits and $36,000 in sales – a sevenfold return on the company’s investment in big data.
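The selection step behind a “We Miss You!” campaign can be sketched in a few lines of Python. This is an assumption-laden illustration, not Fig & Olive's software: the `lapsed_guests` function, the 30-day cutoff, and the guest records are invented for the example.

```python
from datetime import date, timedelta


def lapsed_guests(last_visits, today, min_days_away=30):
    """Return guests whose most recent visit was more than min_days_away days ago."""
    cutoff = today - timedelta(days=min_days_away)
    return sorted(guest for guest, last in last_visits.items() if last < cutoff)


# Made-up guest records: email address -> date of last visit.
last_visits = {
    "alice@example.com": date(2019, 3, 20),
    "bob@example.com": date(2019, 5, 1),
    "carol@example.com": date(2019, 4, 1),
}

print(lapsed_guests(last_visits, today=date(2019, 5, 8)))
# prints ['alice@example.com', 'carol@example.com']
```

Taken at face value, the campaign figures quoted above work out to a little over $120 in sales per returning visit ($36,000 spread over almost 300 visits).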

The MagicBand

The MagicBand is almost as whimsical as it sounds: it’s a data-driven innovation pioneered by the ever-dreamy Disneyland.

Now, imagine visiting a Disneyland park with your friend, partner, or children and each being given a wrist device on entry – one that provides you with key information on queuing times, entertainment start times, and suggestions tailored for you by considering your personality and your preferences. Oh, and one of your favorite Disney mascots greeting you by name. It would make your time at the park all the more, well, magical, right?

Behind the scenes:

With an ever-growing roster of adrenaline-pumping rides, refreshment stands, arcades, bars, restaurants, and experiences within its four walls – and some 150 million people visiting its various parks every year – this hospitality brand uses big data to enhance its customer experience and remain relevant in a competitive marketplace.

Developed with RFID technology, the MagicBand interacts with thousands of sensors strategically placed around its various amusement parks, gathering colossal stacks of big customer data and processing it to not only significantly enhance its customer experience, but gain a wealth of insights that serve to benefit its long-term business intelligence strategy, in addition to its overall operational efficiency – truly a big data testament to the power of business analytics tools in today’s hyper-connected world.

Checking In And Out With Your Smartphone

These days, a great many of us are practically glued to our smartphones. Once developed solely for making and receiving calls and basic text messages, today’s phones are essentially miniature computers, processing streams of big data and breaking down geographical barriers in the process.

When you go to a hotel, often you’re excited, meaning you’ll want to check into your room, freshen up and enjoy the facilities, or head out and explore. However, sluggish service and long queues can end up seriously eating into your time. Moreover, once you have made it past the check-in desk, you run the risk of losing your key – creating a costly and inconvenient nightmare.

That said, what if you could use your smartphone as your key, and what if you could check in and out autonomously, order room service and pre-order drinks and services through a mobile app? Well, you can at Hilton hotels.

Behind the scenes:

At the end of 2017, the acclaimed hotel brand rolled out its mobile key and service technology to 10 of its most prominent UK branches. Due to its success, this innovation has spread internationally and will be extended across its portfolio of 4,000-plus hotels in the near future. In addition to making the hotel hospitality experience more autonomous, the insights collected through the application will help make the hotel’s consumer drinking and dining experience more bespoke.

This cutting-edge big data example from Hilton highlights the fact that by embracing the power of information as well as the connectivity of today’s digital world, it’s possible to transform your customer experience and communicate your value proposition across an almost infinite raft of new consumer channels.

And, as things develop, we expect to see more hotels, bars, pubs and restaurants utilizing this technology in the not so distant future.

A Nostalgic Shift

Amusement arcades were all the rage decades ago but due to the evolution of digital gaming, many traditional entertainment centers outside the bright lights of Sin City simply couldn’t compete with immersive consoles, resulting in a host of closures.

But with a sprinkling of nostalgia and the perfect coupling of old and new, you might have noticed that the amusement arcade is having something of a renaissance. It seems that those who grew up in a time when arcades reigned supreme are craving a nostalgic trip down memory lane, taking their children for good old retro family experiences. You might have also noticed, if you’re one of those people, that alongside all of the offerings you remember from childhood, there is a sprinkling of cutting-edge new amusements and tech-driven developments that make the whole experience more fun, fluid, and easy to navigate.

Behind the scenes:

A shining example of an amusement arcade chain that has stood the test of time is an Australian brand named Timezone.

Speaking to BI Australia, Timezone’s Kane Fong explained:

“By leveraging the big data available to the business, Timezone gained invaluable insights on customer spending habits, visitation times, preferred amusement and geographical proximity to their various branches. In gathering this information, the brand has been able to tailor each branch to its local customers while capitalizing on consumer trends to fortify its long-term business strategy.”

Big data is changing the way we eat, drink, play and gamble in ways that make our lives as consumers easier, more personal, and more entertaining.

What’s even more amazing is that we’re only at the beginning of the adoption of big data in the hospitality and entertainment industries. And as we as humans evolve the way we gather, organize, and analyze data, more incredible examples of big data will emerge in the near and distant future. We are living in exciting times.

The Power of Big Data in American Football

You guessed it right: today we’ll talk about the exciting topic of the moment, the LI (51st) edition of the Super Bowl! On Sunday, February 5th, Houston will see the New England Patriots challenging the Atlanta Falcons, in a final always followed closely by millions of Americans. We at datapine took the opportunity to take a closer look at the role of analytics in American football, how the IoT and predictive analytics are changing the game and what big data brings to the sports industry. Don’t forget to check out our charts and tables at the end of the article to get a feel for the sheer scale of the Super Bowl and its evolution!

From The Origins Till Today: A Monumental Growth

On January 15, 1967 the Green Bay Packers took on the Kansas City Chiefs at the Los Angeles Memorial Coliseum in what is now deemed Super Bowl I. The Green Bay Packers beat the Kansas City Chiefs, 35-10. While the end goal of pummeling each other while attempting to score touchdowns hasn’t changed much, the NFL has evolved greatly since that warm LA day.

Since the first final played in 1967, popularity has grown: in the past 51 years, Super Bowl viewership has grown from 60 million to upwards of 115 million. Even more shocking, the cost of a 30-second commercial has skyrocketed from $42K to over $5 million. Not to mention that even if basic tickets range from $800 to $2,500, the average resale (secondary market) price is beyond imagination: this year’s current average resale price is $5,216, while the most expensive ticket currently on the market, sold as a “package” (with pre-parties, transportation and other perks), is close to $12,750. Excite.com draws up a table of previous editions’ shocking resale prices. Add in the growth of the league and all the changes to rules, equipment, and style of play, and you have a much different game. For football fans, none of this may be news. What may be surprising is that one of the biggest recent changes, and a possible disrupter, is the role of big data in American football.

Sports statistics in football aren’t new. Player and team stats were collected and analyzed during that first Super Bowl game in LA. But the big data and data visualization revolution is shaking things up as usual. The past couple of years have seen a vast improvement in data collection techniques and abilities across industries and sports. American football is no different. A little late to the game compared to Moneyball-inspired baseball, the NFL has unleashed the power of big data over the last few years.

Football And The Internet of Things

The Internet of Things is bringing big changes to American football as well. In 2014, the NFL partnered with Zebra Technologies to place RFID chips in the left and right shoulder pads of NFL players. Each stadium has 20 radio receivers, strategically installed in its lower and upper levels, to pick up player frequencies and collect the data.

The patented RFID player-tracking technology system, The Zebra Sports Solution, provides real-time metrics on players’ speed, acceleration, deceleration, distance traveled and alignment, which is part of the NFL’s “Next Gen Stats.” Additionally, the data captures where players are on the gridiron, their direction and how their speed or acceleration impacts their on-field performance.
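Metrics such as speed, acceleration and distance traveled can all be derived from a stream of timestamped positions. The sketch below shows the basic arithmetic only. It is not Zebra's algorithm: the `track_metrics` function, the fixed sampling interval `dt`, and the sample coordinates are assumptions made for illustration.

```python
import math


def track_metrics(positions, dt):
    """Compute distance, speeds and accelerations from evenly spaced samples.

    positions: list of (x, y) field coordinates in yards, one every dt seconds.
    Returns (total distance in yards, speeds in yards/s, accelerations in yards/s^2).
    """
    # Straight-line distance covered between each pair of consecutive samples.
    steps = [
        math.hypot(x1 - x0, y1 - y0)
        for (x0, y0), (x1, y1) in zip(positions, positions[1:])
    ]
    speeds = [step / dt for step in steps]
    # Change in speed between consecutive intervals; negative values are deceleration.
    accelerations = [(v1 - v0) / dt for v0, v1 in zip(speeds, speeds[1:])]
    return sum(steps), speeds, accelerations


# Made-up samples taken every 0.5 seconds: the player speeds up, then stops.
positions = [(0, 0), (3, 4), (9, 12), (9, 12)]
distance, speeds, accelerations = track_metrics(positions, dt=0.5)

print(distance)       # prints 15.0
print(speeds)         # prints [10.0, 20.0, 0.0]
print(accelerations)  # prints [20.0, -40.0]
```

Real tracking data is noisy, so a production system would smooth the positions before differencing them. That step is left out here to keep the arithmetic visible.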

In the partnership’s first year, 17 stadiums installed the technology. Now, as the partnership rolls into its third season, all 31 stadiums are on board. The NFL will now offer each team the data generated by the Zebra Sports Solution in order to better evaluate athlete performance, as it sees the vast potential of big data in American football. In a 2016 press release, Vishal Shah, the NFL’s Vice President of Digital Media, stated: “we are excited for the 2016 season and for the tracking technology to help teams evolve training, scouting and evaluation through increased knowledge of player performance as well as to provide ways for our teams and partners to enhance the fan experience.”

Michael King, Zebra’s director of sports products, asserted: “Football is more of a chess match. This technology is huge for that.” For football coaches and staff who spend hundreds of hours poring over stats and tape, powerful analytics run on big data can help save time and power effective decision making. Zebra is also providing data visualization to help drive insights. But all this data isn’t just for NFL staff. The NFL sees a possible business in the data as well. “The best fans are the most engaged fans,” King said before shifting to the potential financial windfall. “They will pay for subscription plans to get this data.” Fan engagement is already starting. Microsoft Xbox One can access the collected data through the NFL’s Next Gen Stats features.

Data tracking doesn’t stop with the players. Zebra has partnered with Wilson Sporting Goods to install RFID sensors in the footballs as well. These sensor-enabled footballs were used in the 2016 preseason. As of now, the sensors do not measure pressure (cf. Deflategate), but the data would be used for research that could spark significant changes in officiating, kicking and other areas as soon as the 2017 season.

Protecting The Players With Big Data

It is no secret that the safety of the game can be improved, specifically by preventing, diagnosing and treating head injuries. In this vein, 20 college football programs have installed helmet sensors to monitor concussions and collect data. As usual with the NFL and concussions, there is controversy, and as of now helmet sensors won’t be used to monitor concussions. But as the technology improves and the landscape changes, there is hope that big data will be used to protect NFL players when it comes to head injuries.

Luckily, data is already helping with other injuries. Darryl Lewis, Chief Technology Officer at optical tracking systems company STATS LLC, was at Microsoft running the Xbox game division when the company’s software noted a slight movement bobble with an NBA player during a motion-capture session. “We noticed he was favoring his left side and pointed it out, and when he got checked out by a doctor they noticed a very slight hairline fracture in a foot bone,” says Lewis. Had the injury gone unchecked, it might have become much more complicated and career-impacting.

Real-Time Data On The Sidelines

Microsoft and the NFL partnered in 2013 to make the Microsoft Surface the official tablet of the NFL. It has been a bit of a PR nightmare. After various connectivity issues with the Microsoft Surfaces, New England Patriots coach Bill Belichick has declared he is done with the technology. That said, the tablets allow coaches to demonstrate and review plays on the sidelines, as well as access real-time data from the NFL’s databanks. If some of the sideline technology could be fine-tuned, the access to big data could positively impact the game.

Gaming, Big Data, And Football

Injury prevention isn’t the only real-life intersection of the gaming world and an actual football game. The football video game “Madden NFL” uses more than 60 data points on each individual player to power its game simulations, including information about injuries. At the end of the regular season, the engineers input the new data about the competing teams and run a final simulation. All this data led to Madden NFL correctly predicting 2015’s Super Bowl XLIX. It predicted that the New England Patriots would beat the Seattle Seahawks 28-24, that Patriots quarterback Tom Brady would throw four touchdown passes and be chosen the game’s most valuable player, and that the game-winning touchdown catch would be made by Julian Edelman. The game has correctly predicted the winner in 9 out of the last 12 Super Bowls. And it is getting better. As its data increases, its accuracy will as well.

Predictive Analytics And Football

Madden NFL isn’t the only software tapping into big data to predict NFL game outcomes. Prediction Machine was created by Varick Media Management to predict how a game will end. As its data increases, so do its insights, and it currently boasts a 48-20 (70.6%) all-time record against the spread (ATS) in the NFL postseason. Then there is the tech startup Unanimous A.I. with its swarm artificial intelligence platform known as UNU. UNU correctly predicted the entire outcome of the last Kentucky Derby, down to each horse. However, in some of the real-time swarm questions asked over the past few months, UNU predicted the Green Bay Packers would take home this year’s Super Bowl trophy. Unfortunately for the Packers, we know that is not going to happen, since the two finalists for this LI edition are the New England Patriots and the Atlanta Falcons.

In spite of a few mistakes and rare errors, all of this data stays on course. Big data also helps to fuel predictions in the $70 billion fantasy football industry.

One Decade of Super Bowl

Let us now take a closer look at the various statistics we can gather around this monumental annual event and how some of its data has evolved over time. All charts are created with datapine’s data visualization software.

TV audience VS. price of a commercial

The number of Americans turning on their TV rose by 20% over the past eleven years, reaching almost 112 million viewers for the last edition. In comparison, the price of a commercial doubled, going from $2.5 million to $5 million for 30 seconds of the audience’s attention.

Stadium attendance VS. price of a ticket

Stadium attendance is stable from year to year, since most football stadiums across the US are of a similar size and are packed every year. The only slight difference occurred in 2011, when Dallas’s Jerry Jones wanted to break the attendance record but fell just short. Ticket prices, however, have been multiplied by 4 in only 10 years!

Finalists of the Super Bowl

Three teams have appeared three times since 2006, three others twice, and the remaining seven only once.

Super Bowl winners

To add some nuance to the previous chart, the following table provides the success rate of each team that managed to make it to the final. The best success rate since 2006 goes to the New York Giants, who won both finals they played.

Big Data and American Football

On Sunday, February 5, in Houston, Texas, the National Football League (NFL) season reaches its pinnacle: Super Bowl LI. Over 100 million people will tune in over greasy food and maybe a beer or two to watch the best team in the National Football Conference (NFC) and the best in the American Football Conference (AFC) face off for fame, glory, and a lot of money. Or they may be tuning in to watch Lady Gaga perform or see which 5-million-dollar commercial is the best this year. Either way, real-time and historical data will be driving every aspect of the football game, advertisements, predictions and gambling. It’s official: the era of big data and American football is here.

Stay tuned as next week we come back with a special article analyzing the different stats for each finalist team, comparing their strengths and weaknesses, and our bet on who will lift the trophy this year between the New England Patriots and the Atlanta Falcons!

12 Examples of Big Data Analytics In Healthcare That Can Save People

Big Data has changed the way we manage, analyze and leverage data in any industry. One of the most promising areas where it can be applied to make a change is healthcare. Healthcare analytics have the potential to reduce costs of treatment, predict outbreaks of epidemics, avoid preventable diseases and improve the quality of life in general. The average human lifespan is increasing along with the world population, which poses new challenges to today’s treatment delivery methods. Health professionals, just like business entrepreneurs, are capable of collecting massive amounts of data and are looking for the best strategies to use these numbers. In this article, we would like to address the need for big data in healthcare: why and how can it help? What are the obstacles to its adoption? We will then provide you with 12 big data examples in healthcare that already exist and that we benefit from.

What Is Big Data In Healthcare?

The application of big data analytics in healthcare has a lot of positive and also life-saving outcomes. Big data refers to the vast quantities of information created by the digitization of everything, that gets consolidated and analyzed by specific technologies. Applied to healthcare, it will use specific health data of a population (or of a particular individual) and potentially help to prevent epidemics, cure disease, cut down costs, etc.

Now that we live longer, treatment models have changed, and many of these changes are driven by data. Doctors want to understand as much as they can about a patient, as early in their life as possible, to pick up warning signs of serious illness as they arise – treating any disease at an early stage is far simpler and less expensive. With healthcare data analytics, prevention is better than cure, and managing to draw a comprehensive picture of a patient will let insurers provide a tailored package. This is the industry’s attempt to tackle the silo problem that affects patient data: bits and bytes of it are collected everywhere and archived in hospitals, clinics, surgeries, etc., with no way for these systems to communicate properly.

Indeed, for years gathering huge amounts of data for medical use has been costly and time-consuming. With today’s always-improving technologies, it becomes easier not only to collect such data but also to convert it into relevant critical insights, that can then be used to provide better care. This is the purpose of healthcare data analytics: using data-driven findings to predict and solve a problem before it is too late, but also assess methods and treatments faster, keep better track of inventory, involve patients more in their own health and empower them with the tools to do so.

Why We Need Big Data Analytics In Healthcare

There’s a huge need for big data in healthcare as well, due to rising costs in nations like the United States. As a McKinsey report states, “After more than 20 years of steady increases, healthcare expenses now represent 17.6 percent of GDP —nearly $600 billion more than the expected benchmark for a nation of the United States’s size and wealth.”

In other words, costs are much higher than they should be, and they have been rising for the past 20 years. Clearly, we are in need of some smart, data-driven thinking in this area. And current incentives are changing as well: many insurance companies are switching from fee-for-service plans (which reward using expensive and sometimes unnecessary treatments and treating large numbers of patients quickly) to plans that prioritize patient outcomes.

As the authors of the popular Freakonomics books have argued, financial incentives matter – and incentives that prioritize patients’ health over treating large numbers of patients are a good thing.

Why does this matter?

Well, in the previous scheme, healthcare providers had no direct incentive to share patient information with one another, which made it harder to utilize the power of analytics. Now that more of them are getting paid based on patient outcomes, they have a financial incentive to share data that can be used to improve the lives of patients while cutting costs for insurance companies.

Finally, physician decisions are becoming more and more evidence-based, meaning that they rely on large swathes of research and clinical data as opposed to solely their schooling and professional opinion. As in many other industries, data gathering and management is getting bigger, and professionals need help in the matter. This new treatment attitude means there is a greater demand for big data analytics in healthcare facilities than ever before, and the rise of SaaS BI tools is also answering that need.

Obstacles To A Widespread Big Data Healthcare

One of the biggest hurdles standing in the way of using big data in medicine is that medical data is spread across many sources governed by different states, hospitals, and administrative departments. Integration of these data sources would require developing a new infrastructure where all data providers collaborate with each other.

Equally important is implementing new online reporting software and a business intelligence strategy. Healthcare needs to catch up with other industries that have already moved from standard regression-based methods to more future-oriented ones such as predictive analytics, machine learning, and graph analytics.

However, there are some glorious instances where it doesn’t lag behind, such as EHRs (especially in the US). So, even if these services are not your cup of tea, you are a potential patient, and so you should care about new healthcare analytics applications. Besides, it’s good to take a look around sometimes and see how other industries cope with it. They can inspire you to adapt and adopt some good ideas.

12 Big Data Applications In Healthcare

1) Patients Predictions For An Improved Staffing

For our first example of big data in healthcare, we will look at one classic problem that any shift manager faces: how many people do I put on staff at any given time period? If you put on too many workers, you run the risk of having unnecessary labor costs add up. With too few workers, you can have poor customer service outcomes – which can be fatal for patients in that industry.

Big data is helping to solve this problem, at least at a few hospitals in Paris. A Forbes article details how four hospitals which are part of the Assistance Publique-Hôpitaux de Paris have been using data from a variety of sources to come up with daily and hourly predictions of how many patients are expected to be at each hospital.

One of the key data sets is 10 years’ worth of hospital admissions records, which data scientists crunched using “time series analysis” techniques. These analyses allowed the researchers to see relevant patterns in admission rates. Then, they could use machine learning to find the most accurate algorithms that predicted future admissions trends.

Summing up the product of all this work, Forbes states: “The result is a web browser-based interface designed to be used by doctors, nurses and hospital administration staff – untrained in data science – to forecast visit and admission rates for the next 15 days. Extra staff can be drafted in when high numbers of visitors are expected, leading to reduced waiting times for patients and better quality of care.”
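The Forbes piece does not say which models the Paris team settled on, so the following is only a stand-in to show what a 15-day admissions forecast looks like in code. It uses a simple seasonal baseline (the average of the same weekday over the last few weeks) rather than the machine learning approach described above. The `seasonal_forecast` function, its parameters, and the admission counts are invented for illustration.

```python
def seasonal_forecast(daily_admissions, horizon=15, season=7, weeks=4):
    """Forecast each future day as the mean of the same weekday in the last `weeks` weeks."""
    history = list(daily_admissions)
    if len(history) < season * weeks:
        raise ValueError("need at least season * weeks days of history")
    forecast = []
    for _ in range(horizon):
        # history[-season * k] is the same weekday k weeks before the day being forecast.
        same_weekday = [history[-season * k] for k in range(1, weeks + 1)]
        value = sum(same_weekday) / weeks
        forecast.append(value)
        # Later forecast days build on earlier forecast values.
        history.append(value)
    return forecast


# Made-up history: four identical weeks, quieter at the weekend.
week = [100, 110, 120, 130, 140, 90, 80]
history = week * 4

forecast = seasonal_forecast(history)
print(forecast[:7])  # prints [100.0, 110.0, 120.0, 130.0, 140.0, 90.0, 80.0]
```

A baseline like this is mainly useful as a yardstick: a more sophisticated model is only worth deploying if it beats it on held-out data.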

2) Electronic Health Records (EHRs)

It’s the most widespread application of big data in medicine. Every patient has their own digital record, which includes demographics, medical history, allergies, laboratory test results, etc. Records are shared via secure information systems and are available to providers from both the public and private sectors. Every record consists of one modifiable file, which means that doctors can implement changes over time with no paperwork and no danger of data replication.

EHRs can also trigger warnings and reminders when a patient should get a new lab test or track prescriptions to see if a patient has been following doctors’ orders.

Although EHRs are a great idea, many countries still struggle to fully implement them. The U.S. has made a major leap, with 94% of hospitals adopting EHRs according to this HITECH research, but the EU still lags behind. However, an ambitious directive drafted by the European Commission is supposed to change that: by 2020, a centralized European health record system should become a reality.

Kaiser Permanente is leading the way in the U.S., and could provide a model for the EU to follow. They’ve fully implemented a system called HealthConnect that shares data across all of their facilities and makes it easier to use EHRs. A McKinsey report on big data healthcare states that “The integrated system has improved outcomes in cardiovascular disease and achieved an estimated $1 billion in savings from reduced office visits and lab tests.”

3) Real-Time Alerting

Other examples of big data analytics in healthcare share one crucial functionality – real-time alerting. In hospitals, Clinical Decision Support (CDS) software analyzes medical data on the spot, providing health practitioners with advice as they make prescriptive decisions.

However, doctors want patients to stay away from hospitals to avoid costly in-house treatments. Analytics, already trending as one of the business intelligence buzzwords in 2019, has the potential to become part of a new strategy. Wearables will collect patients’ health data continuously and send this data to the cloud.

Additionally, this information will be fed into a database on the state of health of the general public, which will allow doctors to compare the data in its socioeconomic context and modify delivery strategies accordingly. Institutions and care managers will use sophisticated tools to monitor this massive data stream and react every time the results are disturbing.

For example, if a patient’s blood pressure increases alarmingly, the system will send an alert in real time to the doctor who will then take action to reach the patient and administer measures to lower the pressure.
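The alerting logic in this example can be sketched as a small stream filter. Everything specific here is an assumption for illustration: the `blood_pressure_alerts` function, the limit of 180, and the rule of two consecutive high readings are placeholders, not clinical guidance or any vendor's implementation.

```python
def blood_pressure_alerts(readings, systolic_limit=180, consecutive=2):
    """Yield a patient ID once that patient has `consecutive` high readings in a row.

    readings: iterable of (patient_id, systolic_pressure) pairs in arrival order.
    """
    streak = {}
    for patient_id, systolic in readings:
        if systolic >= systolic_limit:
            streak[patient_id] = streak.get(patient_id, 0) + 1
            # Alert exactly once per streak, when the streak first reaches the limit.
            if streak[patient_id] == consecutive:
                yield patient_id
        else:
            streak[patient_id] = 0


# Made-up wearable readings arriving from two patients.
stream = [
    ("p1", 150), ("p2", 185), ("p1", 182),
    ("p2", 190), ("p1", 170), ("p2", 192),
]

print(list(blood_pressure_alerts(stream)))  # prints ['p2']
```

Requiring several high readings in a row is one simple way to avoid paging a doctor over a single noisy measurement.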

Another example is that of Asthmapolis, which has started to use inhalers with GPS-enabled trackers in order to identify asthma trends both on an individual level and looking at larger populations. This data is being used in conjunction with data from the CDC in order to develop better treatment plans for asthmatics.

4) Enhancing Patient Engagement

Many consumers – and hence, potential patients – already have an interest in smart devices that record every step they take, their heart rates, sleeping habits, etc., on a permanent basis. All this vital information can be coupled with other trackable data to identify potential health risks. Chronic insomnia and an elevated heart rate can signal a risk of future heart disease, for instance. Patients are directly involved in the monitoring of their own health, and incentives from health insurers can push them to lead a healthy lifestyle (e.g., giving money back to people using smart watches).

Another way to do so comes with new wearables under development, which track specific health trends and relay them to the cloud where physicians can monitor them. Patients suffering from asthma or blood pressure problems could benefit from this, become a bit more independent and reduce unnecessary visits to the doctor.

5) Prevent Opioid Abuse In The US

Our fifth example of big data healthcare is tackling a serious problem in the US. Here’s a sobering fact: as of this year, overdoses from misused opioids have caused more accidental deaths in the U.S. than road accidents, which were previously the most common cause of accidental death.

Analytics expert Bernard Marr writes about the problem in a Forbes article. The situation has gotten so dire that Canada has declared opioid abuse to be a “national health crisis,” and President Obama earmarked $1.1 billion for developing solutions to the issue while he was in office.

Once again, an application of big data analytics in healthcare might be the answer everyone is looking for: data scientists at Blue Cross Blue Shield have started working with analytics experts at Fuzzy Logix to tackle the problem. Using years of insurance and pharmacy data, Fuzzy Logix analysts have been able to identify 742 risk factors that predict with a high degree of accuracy whether someone is at risk for abusing opioids.

As Blue Cross Blue Shield data scientist Brandon Cosley states in the Forbes piece: “It’s not like one thing – ‘he went to the doctor too much’ – is predictive … it’s like ‘well you hit a threshold of going to the doctor and you have certain types of conditions and you go to more than one doctor and live in a certain zip code…’ Those things add up.”
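The idea that individual factors "add up" can be illustrated with a minimal sketch of an additive risk score. This is not the Fuzzy Logix model: the four factor names, their weights, and the baseline value below are all invented for illustration, and a real model would learn its weights from historical data.

```python
import math

# Hypothetical risk factors and weights, invented for illustration only.
WEIGHTS = {
    "frequent_doctor_visits": 0.8,
    "multiple_prescribers": 1.1,
    "chronic_pain_condition": 0.9,
    "high_risk_zip_code": 0.5,
}
BIAS = -3.0  # assumed baseline log-odds when no factor is present


def risk_score(patient: dict) -> float:
    """Return a score in (0, 1) by summing the weights of the factors present."""
    log_odds = BIAS + sum(
        weight for name, weight in WEIGHTS.items() if patient.get(name)
    )
    # The logistic function maps the summed log-odds to a probability-like value.
    return 1.0 / (1.0 + math.exp(-log_odds))


one_factor = {"frequent_doctor_visits": True}
several_factors = {
    "frequent_doctor_visits": True,
    "multiple_prescribers": True,
    "chronic_pain_condition": True,
    "high_risk_zip_code": True,
}

print(round(risk_score(one_factor), 3))
print(round(risk_score(several_factors), 3))
```

With these made-up weights, a single factor leaves the score low, while the combination of all four pushes it above one half, which mirrors the point made in the quote.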

To be fair, reaching out to people identified as “high risk” and preventing them from developing a drug issue is a delicate undertaking. However, this project still offers a lot of hope towards mitigating an issue which is destroying the lives of many people and costing the system a lot of money.

6) Using Health Data For Informed Strategic Planning

The use of big data in healthcare allows for strategic planning thanks to better insights into people’s motivations. Care managers can analyze check-up results among people in different demographic groups and identify what factors discourage people from taking up treatment.

The University of Florida made use of Google Maps and free public health data to prepare heat maps targeted at multiple issues, such as population growth and chronic diseases. Subsequently, academics compared this data with the availability of medical services in the hottest areas. The insights gleaned from this allowed them to review their delivery strategy and add more care units to the most problematic areas.

7) Big Data Might Just Cure Cancer

Another interesting example of the use of big data in healthcare is the Cancer Moonshot program. Before the end of his second term, President Obama came up with this program that had the goal of accomplishing 10 years’ worth of progress towards curing cancer in half that time.

Medical researchers can use large amounts of data on treatment plans and recovery rates of cancer patients in order to find trends and treatments that have the highest rates of success in the real world. For example, researchers can examine tumor samples in biobanks that are linked up with patient treatment records. Using this data, researchers can see things like how certain mutations and cancer proteins interact with different treatments and find trends that will lead to better patient outcomes.

This data can also lead to unexpected benefits, such as finding that Desipramine, which is an anti-depressant, has the ability to help cure certain types of lung cancer.

However, in order to make these kinds of insights more available, patient databases from different institutions such as hospitals, universities, and nonprofits need to be linked up. Then, for example, researchers could access patient biopsy reports from other institutions. Another potential use case would be genetically sequencing cancer tissue samples from clinical trial patients and making these data available to the wider cancer database.

But, there are a lot of obstacles in the way, including:

Incompatible data systems. This is perhaps the biggest technical challenge, as making these data sets able to interface with each other is quite a feat.

Patient confidentiality issues. There are differing laws state by state which govern what patient information can be released with or without consent, and all of these would have to be navigated.

Simply put, institutions which have put a lot of time and money into developing their own cancer dataset may not be eager to share with others, even though it could lead to a cure much more quickly.

However, as an article by Fast Company states, there are precedents to navigating these types of problems: “…the U.S. National Institutes of Health (NIH) has hooked up with a half-dozen hospitals and universities to form the Undiagnosed Disease Network, which pools data on super-rare conditions (like those with just a half-dozen sufferers), for which every patient record is a treasure to researchers.”

Hopefully, Obama’s panel will be able to navigate the many roadblocks in the way and accelerate progress towards curing cancer using the strength of data analytics.

8) Predictive Analytics In Healthcare

We have already recognized predictive analytics as one of the biggest business intelligence trends two years in a row, but the potential applications reach far beyond business and much further into the future. Optum Labs, a US research collaborative, has collected EHRs of over 30 million patients to create a database for predictive analytics tools that will improve the delivery of care.

The goal of healthcare business intelligence is to help doctors make data-driven decisions within seconds and improve patients’ treatment. This is particularly useful for patients with complex medical histories who suffer from multiple conditions. New tools would also be able to predict, for example, who is at risk of diabetes, so that those patients can be advised to make use of additional screenings or weight management.

9) Reduce Fraud And Enhance Security

Some studies have shown that this particular industry is 200% more likely to experience data breaches than any other industry. The reason is simple: personal data is extremely valuable and profitable on the black market, and any breach would have dramatic consequences. With that in mind, many organizations have started to use analytics to help prevent security threats by identifying changes in network traffic, or any other behavior that suggests a cyber-attack. Of course, big data has inherent security issues, and many think that using it will make organizations more vulnerable than they already are. But advances in security such as encryption technology, firewalls, anti-virus software, etc., answer that need for more security, and the benefits largely outweigh the risks.

Likewise, analytics can help prevent fraud and inaccurate claims in a systematic, repeatable way. It helps streamline the processing of insurance claims, enabling patients to get better returns on their claims and caregivers to be paid faster. For instance, the Centers for Medicare and Medicaid Services said they saved over $210.7 million in fraud in just a year.
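To make the idea of "identifying changes in network traffic" more concrete, here is a minimal sketch of one common and very simple approach: flagging values that lie far from a historical baseline. The function name, the threshold of three standard deviations, and the traffic numbers are assumptions made for this example; production systems typically use far richer signals.

```python
from statistics import mean, stdev


def find_anomalies(baseline, recent, threshold=3.0):
    """Return (index, value) pairs in `recent` that deviate from the baseline.

    A value is flagged when it lies more than `threshold` standard
    deviations away from the mean of the baseline measurements.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value counts as unusual.
        return [(i, v) for i, v in enumerate(recent) if v != mu]
    return [
        (i, v) for i, v in enumerate(recent)
        if abs(v - mu) / sigma > threshold
    ]


# Made-up requests-per-minute counts for a single server.
baseline_traffic = [120, 118, 125, 130, 122, 119, 127, 124, 121, 126]
recent_traffic = [123, 128, 560, 119]

print(find_anomalies(baseline_traffic, recent_traffic))
```

In this toy data set only the sudden spike is reported, while the ordinary minute-to-minute fluctuations stay below the threshold.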

10) Telemedicine

Telemedicine has been present on the market for over 40 years, but only today, with the arrival of online video conferences, smartphones, wireless devices, and wearables, has it been able to come into full bloom. The term refers to delivery of remote clinical services using technology.

It is used for primary consultations and initial diagnosis, remote patient monitoring, and medical education for health professionals. Some more specific uses include telesurgery – doctors can perform operations with the use of robots and high-speed real-time data delivery without physically being in the same location with a patient.

Clinicians use telemedicine to provide personalized treatment plans and prevent hospitalization or re-admission. Such use of healthcare data analytics can be linked to the use of predictive analytics as seen previously. It allows clinicians to predict acute medical events in advance and prevent deterioration of patients’ conditions.

By keeping patients away from hospitals, telemedicine helps to reduce costs and improve the quality of service. Patients can avoid waiting lines and doctors don’t waste time on unnecessary consultations and paperwork. Telemedicine also improves the availability of care, as patients can be monitored and consulted anywhere and anytime.

11) Integrating Big Data With Medical Imaging

Medical imaging is vital, and each year about 600 million imaging procedures are performed in the US. Manually analyzing and storing these images is expensive in terms of both time and money, as radiologists need to examine each image individually, while hospitals need to store them for several years.

Medical imaging provider Carestream explains how big data analytics for healthcare could change the way images are read: algorithms developed by analyzing hundreds of thousands of images could identify specific patterns in the pixels and convert them into a number to help the physician with the diagnosis. They go even further, saying that radiologists may no longer need to look at the images, but instead analyze the outcomes of algorithms that will inevitably study and remember more images than they could in a lifetime. This would undoubtedly impact the role of radiologists, their education, and required skillset.

12) A Way To Prevent Unnecessary ER Visits

Saving time, money and energy using big data analytics for healthcare is necessary. What if we told you that over the course of 3 years, one woman visited the ER more than 900 times? That situation is a reality in Oakland, California, where a woman who suffers from mental illness and substance abuse went to a variety of local hospitals on an almost daily basis.

This woman’s issues were exacerbated by the lack of shared medical records between local emergency rooms, increasing the cost to taxpayers and hospitals, and making it harder for this woman to get good care. As Tracy Schrider, who coordinates the care management program at Alta Bates Summit Medical Center in Oakland stated in a Kaiser Health News article:

“Everybody meant well. But she was being referred to three different substance abuse clinics and two different mental health clinics, and she had two case management workers both working on housing. It was not only bad for the patient, it was also a waste of precious resources for both hospitals.”

In order to prevent future situations like this from happening, Alameda County hospitals came together to create a program called PreManage ED, which shares patient records between emergency departments.

This system lets ER staff know things like:

If the patient they are treating has already had certain tests done at other hospitals, and what the results of those tests are

If the patient in question already has a case manager at another hospital, preventing unnecessary assignments

What advice has already been given to the patient, so that a coherent message to the patient can be maintained by providers

This is another great example where the application of healthcare analytics is useful and needed. In the past, hospitals without PreManage ED would repeat tests over and over, and even if they could see that a test had been done at another hospital, they would have to go old school and request or send a long fax just to get the information they needed.
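The kind of lookup described above, checking whether a test has already been done elsewhere, can be sketched in a few lines. This is only an illustration of the concept and says nothing about how PreManage ED is actually built: the `LabResult` and `SharedRecordIndex` names, their fields, and the sample data are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LabResult:
    patient_id: str
    hospital: str
    test_name: str
    result: str


class SharedRecordIndex:
    """In-memory stand-in for a record-sharing service (hypothetical)."""

    def __init__(self):
        self._by_patient = defaultdict(list)

    def add(self, record: LabResult) -> None:
        self._by_patient[record.patient_id].append(record)

    def prior_tests(self, patient_id: str, test_name: str, current_hospital: str):
        """Return matching tests already performed at other hospitals."""
        return [
            r for r in self._by_patient.get(patient_id, [])
            if r.test_name == test_name and r.hospital != current_hospital
        ]


index = SharedRecordIndex()
index.add(LabResult("p-001", "Hospital A", "chest x-ray", "normal"))
index.add(LabResult("p-001", "Hospital B", "blood panel", "normal"))

# Staff at Hospital B check before ordering another chest x-ray.
found = index.prior_tests("p-001", "chest x-ray", current_hospital="Hospital B")
print(found)
```

Here the lookup returns the earlier result from the other hospital, so the test would not need to be repeated or requested by fax.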

How To Use Big Data In Healthcare

All in all, these 12 examples of big data applications in healthcare reveal three main trends: the patient experience could improve dramatically, including quality of treatment and satisfaction; the overall health of the population should improve over time; and overall costs should fall. Let’s now look at a concrete example of how to use data analytics in healthcare, in a hospital for instance:

This healthcare dashboard provides the overview you need as a hospital director or facility manager. By gathering in one central place all the data on every division of the hospital, including attendance, its nature, and the costs incurred, it gives you the big picture of your facility, which will be of great help in running it smoothly.

You can see here the most important metrics concerning various aspects: the number of patients that were welcomed in your facility, how long they stayed and where, how much it cost to treat them, and the average waiting time in emergency rooms. Such a holistic view helps top management identify potential bottlenecks, spot trends and patterns over time, and in general assess the situation. This is key to making better-informed decisions that will improve overall operational performance, with the goal of treating patients better and having the right staffing resources.
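As a rough illustration of how the metrics listed above could be derived from raw visit records, consider the following sketch. The `Visit` fields, the `summarize` function, and the sample values are assumptions made for this example and are not taken from any particular dashboard product.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Visit:
    division: str
    length_of_stay_days: float
    treatment_cost: float
    # None when the patient was not admitted through the emergency room.
    er_wait_minutes: Optional[float] = None


def summarize(visits):
    """Compute the headline metrics a hospital dashboard might display."""
    if not visits:
        raise ValueError("no visits to summarize")
    er_waits = [v.er_wait_minutes for v in visits if v.er_wait_minutes is not None]
    by_division = {}
    for v in visits:
        by_division[v.division] = by_division.get(v.division, 0) + 1
    return {
        "patients": len(visits),
        "avg_length_of_stay_days": mean(v.length_of_stay_days for v in visits),
        "avg_treatment_cost": mean(v.treatment_cost for v in visits),
        "avg_er_wait_minutes": mean(er_waits) if er_waits else None,
        "patients_by_division": by_division,
    }


# Made-up sample data.
visits = [
    Visit("Cardiology", 4.0, 5200.0, 35.0),
    Visit("Surgery", 6.0, 9800.0),
    Visit("Cardiology", 2.0, 3000.0, 55.0),
]

summary = summarize(visits)
for name, value in summary.items():
    print(f"{name}: {value}")
```

Averages are only one possible choice; a real dashboard would probably also show medians or trends over time, since a few very long stays can distort a mean.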

Our List of 12 Big Data Examples In Healthcare

The industry is changing, and as in any other, big data is starting to transform it – but there is still a lot of work to be done. The sector is slowly adopting the new technologies that will push it into the future, helping it to make better-informed decisions, improve operations, etc. In a nutshell, here’s a short list of the examples we have gone over in this article. With healthcare data analytics, you can:

Predict the daily number of incoming patients to tailor staffing accordingly

Use Electronic Health Records (EHRs)

Use real-time alerting for instant care

Help in preventing opioid abuse in the US

Enhance patient engagement in their own health

Use health data for better-informed strategic planning

Research more extensively to cure cancer

Use predictive analytics

Reduce fraud and enhance data security

Practice telemedicine

Integrate medical imaging for a broader diagnosis

Prevent unnecessary ER visits

These 12 examples of big data in healthcare show that the development of medical applications of data should be the apple of data science’s eye, as they have the potential to save money and, most importantly, people’s lives. Already today, they allow for the early identification of illnesses in individual patients and socioeconomic groups, and for preventive action because, as we all know, prevention is better than cure.

Applications of Big Data in Manufacturing and Natural Resources

Increasing demand for natural resources including oil, agricultural products, minerals, gas, metals, and so on has led to an increase in the volume, complexity, and velocity of data that is a challenge to handle.

Similarly, large volumes of data from the manufacturing industry go untapped. If we properly utilize this data, we can get improved product quality, energy efficiency, reliability, and better profit margins.